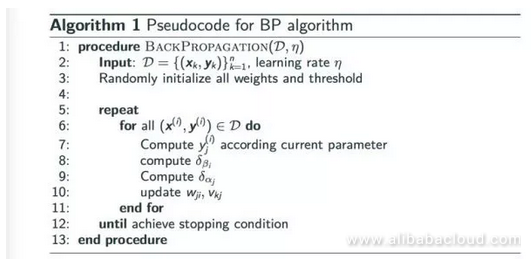

Backpropagation was invented in the 1970s as a general optimization method for performing automatic differentiation of complex nested functions. It is considered an efficient algorithm, and modern implementations take advantage of specialized GPUs to further improve performance.

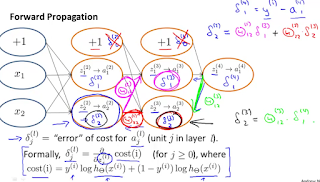

Backpropagation is a generalization of the delta rule for perceptrons to multilayer feedforward neural networks. The "backwards" part of the name stems from the fact that calculation of the gradient proceeds backwards through the network: the gradient of the final layer of weights is calculated first, and the gradient of the first layer of weights is calculated last. Partial computations of the gradient from one layer are reused in the computation of the gradient for the previous layer. This backwards flow of the error information allows the gradient at each layer to be computed efficiently, compared with the naive approach of calculating the gradient of each layer separately.

Backpropagation's popularity has experienced a recent resurgence given the widespread adoption of deep neural networks for image recognition and speech recognition.
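To make the reuse of partial computations concrete, here is a minimal sketch for a hypothetical two-layer network with a single scalar weight per layer. The network shape, the sigmoid hidden unit, the squared-error function, and all names (`forward`, `backprop`, `numeric_grad`) are assumptions chosen for illustration, not something the text above prescribes.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(w1, w2, x):
    """Forward pass of an assumed two-layer scalar network."""
    h = sigmoid(w1 * x)   # hidden activation
    y = w2 * h            # linear output
    return h, y

def error(w1, w2, x, t):
    """Assumed error function: E = 0.5 * (y - t)^2."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - t) ** 2

def backprop(w1, w2, x, t):
    """Return (dE/dw1, dE/dw2), working from the output layer backwards."""
    h, y = forward(w1, w2, x)
    delta_out = y - t                               # dE/dy
    grad_w2 = delta_out * h                         # final layer is computed first
    delta_hidden = delta_out * w2 * h * (1.0 - h)   # reuses delta_out from the layer above
    grad_w1 = delta_hidden * x                      # first layer is computed last
    return grad_w1, grad_w2

def numeric_grad(w1, w2, x, t, eps=1e-6):
    """Naive check: perturb each weight separately (central differences)."""
    g1 = (error(w1 + eps, w2, x, t) - error(w1 - eps, w2, x, t)) / (2 * eps)
    g2 = (error(w1, w2 + eps, x, t) - error(w1, w2 - eps, x, t)) / (2 * eps)
    return g1, g2
```

Note that `delta_out` is computed once and then reused for both weights. The finite-difference check, by contrast, needs extra forward passes for every weight, which is the kind of per-weight cost that backpropagation avoids.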

Backpropagation, short for "backward propagation of errors," is an algorithm for supervised learning of artificial neural networks using gradient descent. Given an artificial neural network and an error function, the method calculates the gradient of the error function with respect to the neural network's weights.
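As a rough illustration of how such a gradient feeds gradient descent, the sketch below trains a single linear unit, which is the simplest case (the delta rule) rather than full multilayer backpropagation. The squared-error function, the learning rate, the step count, and the function names are all assumptions made for this example.

```python
def predict(weights, inputs):
    """Output of a single linear unit: the weighted sum of its inputs."""
    return sum(w * x for w, x in zip(weights, inputs))

def gradient(weights, inputs, target):
    """Gradient of E = 0.5 * (y - target)^2 with respect to each weight."""
    err = predict(weights, inputs) - target
    return [err * x for x in inputs]

def train(weights, inputs, target, learning_rate=0.1, steps=50):
    """Plain gradient descent: step each weight against its gradient."""
    for _ in range(steps):
        grad = gradient(weights, inputs, target)
        weights = [w - learning_rate * g for w, g in zip(weights, grad)]
    return weights

# Example usage on one hypothetical training pair.
trained = train([0.0, 0.0], [1.0, 2.0], 1.0)
```

In a multilayer network the update step stays the same; only the gradient computation changes, with backpropagation supplying the gradient for every layer's weights.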
